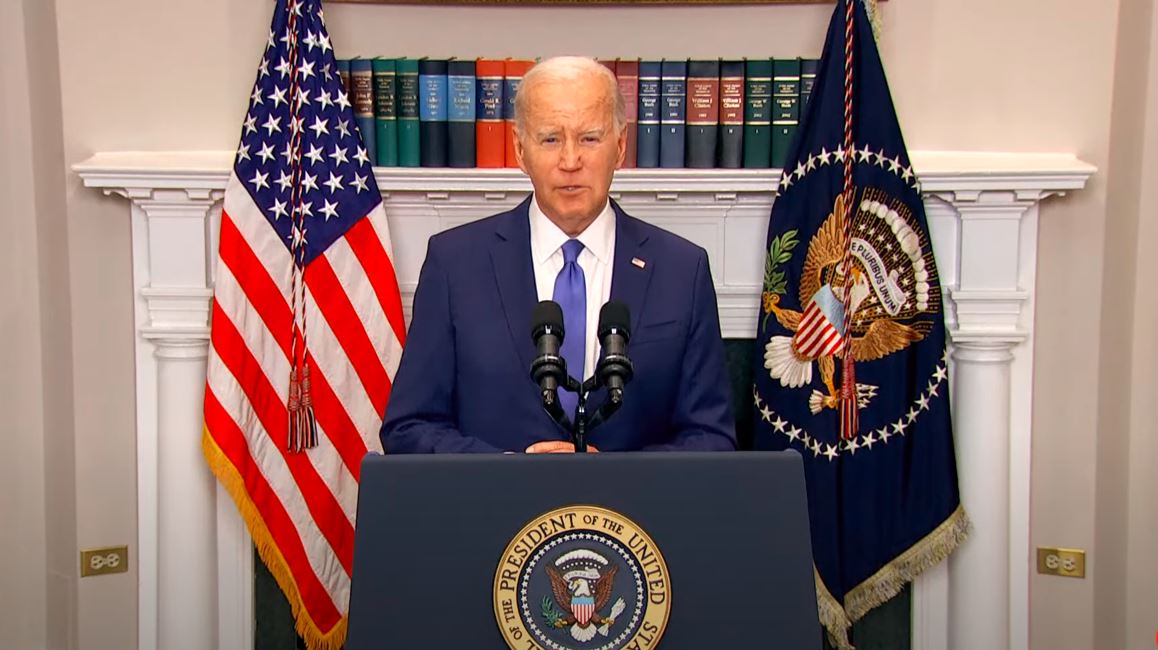

President Biden to Announce AI Executive Order on Monday

The Biden administration is gearing up to introduce a comprehensive executive order on artificial intelligence (AI) to address and regulate the rapidly evolving technology.

This move is considered one of the US government’s most ambitious attempts to address concerns and potential risks associated with AI.

According to reports, the executive order is expected to be released on October 30, just two days before an international summit on AI.

The order’s primary goal is to ensure that AI models undergo thorough assessments before federal workers use them, leveraging the US government’s position as a major technology customer to mitigate the risks of AI use.

Additionally, the executive order will require various federal government agencies, including the Defense Department, Energy Department, and intelligence agencies, to explore and incorporate AI into their work.

A particular focus will be placed on enhancing national cyber defenses.

The order’s details have yet to be finalized, so they could still change.

The executive order comes as other governments are also working on regulations to address the risks and challenges tied to AI.

For example, the European Union is finalizing the EU AI Act, a comprehensive package designed to protect consumers from potentially dangerous applications of AI.

Regulating AI presents a significant test for the Biden administration, which has expressed a commitment to addressing perceived abuses by Silicon Valley.

While progress has been made in certain areas, such as initiating high-profile competition lawsuits against tech giants, there have also been setbacks in the courts.

The executive order on AI reflects the administration’s renewed efforts to address potential harms associated with AI, including its impact on jobs, surveillance, and democracy.

In addition to executive action, Congress is actively working on bipartisan legislation to address the challenges posed by AI.

In July, President Biden acknowledged the need for substantial work to harness the potential of AI and manage its risks through new laws, regulations, and oversight.

During the same month, the administration announced that seven major tech and AI companies had voluntarily committed to advancing safe, secure, and transparent AI development.

These companies include Amazon, Anthropic, Google, Inflection, Meta, Microsoft, and OpenAI.